HearThere

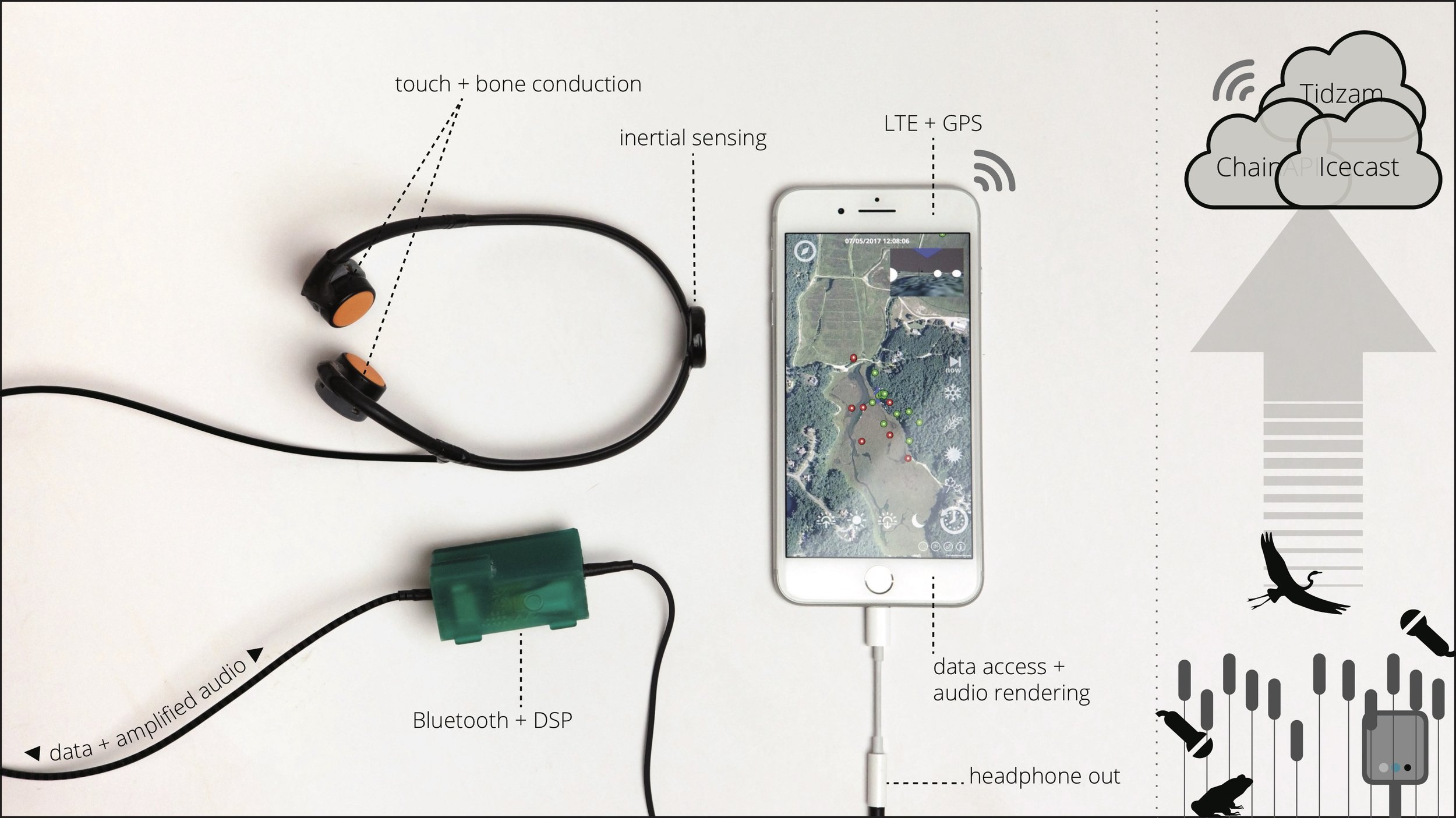

HearThere is a smart headphone device that extends perception and amplifies attention, connecting its wearer to networks of distributed sensors in the surrounding environment. Using non-occluding (bone-conducting) transducers and combined on-board/on-body sensing, HearThere produces a digital layer of auditory perception that blurs together with the wearer’s natural hearing. The device supports both auditory augmented reality and sensory augmentation research and applications. Factors such as stillness, together with optional EEG and eye-tracking sensors, are used to infer the wearer’s listening state and adjust the presented sound accordingly.

The research platform was originally designed to be used at the Tidmarsh Wildlife Sanctuary, a publicly-accessible restored wetland in southern Massachusetts instrumented with hundreds of real-time environmental sensor nodes and dozens of live-streaming microphones. Visitors wearing HearThere can hear through the microphones in their surroundings, producing an experience users have alternately described as a “sensory superpower” and a “tool for exploring a landscape.” Simply stopping to listen turns up the world so slowly and subtly as to be almost imperceptible. When a user fixates on a bird’s nest in a distant tree, HearThere amplifies their focus by raising the levels of the microphones there, while still preserving spatial and distance cues.
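The two behaviors described above, a slow rise in level while the wearer is still and a boost for microphones near the point of fixation, can be illustrated with a minimal sketch. This is not HearThere's implementation: the function names, the ramp rates, the Gaussian falloff, and every numeric value are hypothetical, chosen only to show the idea.

```python
import math

def step_gain(gain, is_still, dt, rise_per_s=0.02, fall_per_s=0.2, max_gain=1.0):
    """Advance the overall augmentation gain by one time step of dt seconds.

    Hypothetical rates: the gain creeps up slowly while the wearer is still
    and falls back faster once they start moving again.
    """
    rate = rise_per_s if is_still else -fall_per_s
    return min(max_gain, max(0.0, gain + rate * dt))

def focus_boost_db(angle_deg, width_deg=15.0, max_boost_db=6.0):
    """Extra level in dB for a microphone angle_deg away from the gaze direction.

    A Gaussian falloff (an assumption) gives the full boost at the fixation
    point and almost none far from it. The boost is added on top of whatever
    spatial and distance rendering is already applied, so those cues remain.
    """
    return max_boost_db * math.exp(-0.5 * (angle_deg / width_deg) ** 2)

# Ten seconds of stillness, sampled once per second.
gain = 0.0
for _ in range(10):
    gain = step_gain(gain, is_still=True, dt=1.0)
```

With these made-up rates, the gain takes close to a minute of stillness to reach its maximum, which is the kind of gradual change the description calls almost imperceptible.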

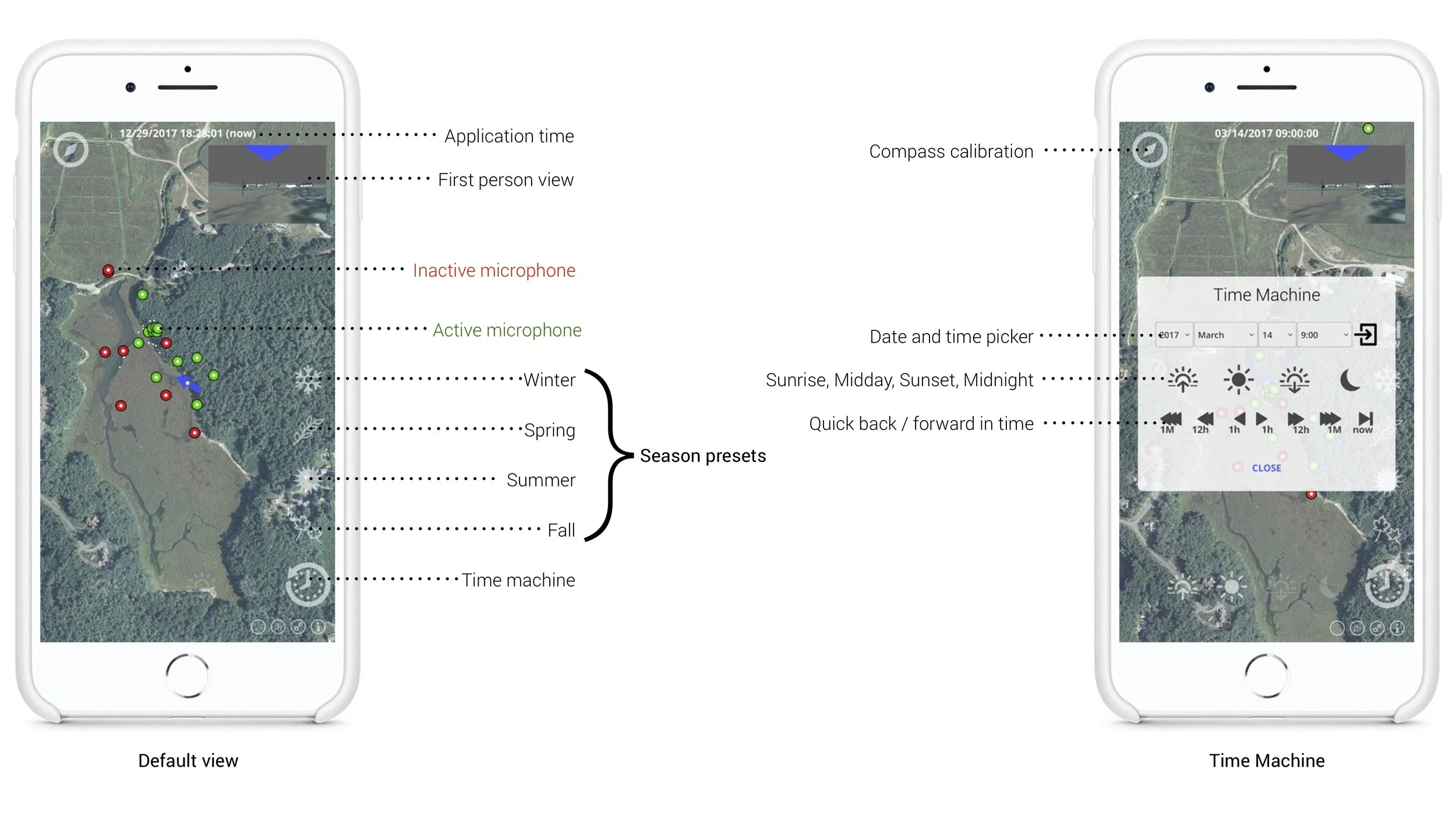

HearThere’s accompanying mobile application, called Sensorium, allows users to switch between real-time streams and a huge database of recorded audio, whether to experience an amplified present or to walk through the sound of the morning, the last season, or any time in the past years. Easily configurable in the Unity development environment, Sensorium allows designers to place live-streaming and static audio sources using real-world coordinates, and embeds SensorChimes, an extension to Pure Data (Pd) that streamlines the creation of sonification and generative composition. At the Tidmarsh site, a number of user-selectable generative musical pieces extend the listener’s ambient awareness through sensors all around them. A flexible weighting system for audio sources allows external applications to influence the mix; at Tidmarsh, output from a real-time AI wildlife classification system is used to tilt the mix towards wildlife of interest.
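As a rough illustration of how per-source weights could tilt a mix, the sketch below multiplies each source's base gain by an externally supplied weight and then rescales so the total stays bounded. It does not describe Sensorium's actual API: the `Source` class, the `mix_gains` function, and the normalization rule are all assumptions made for the example.

```python
from dataclasses import dataclass

@dataclass
class Source:
    name: str
    base_gain: float     # gain from the spatial/distance model (hypothetical, 0..1)
    weight: float = 1.0  # external weight, e.g. set from a classifier's output

def mix_gains(sources, max_total=1.0):
    """Return per-source gains: base_gain * weight, scaled down if their sum exceeds max_total."""
    raw = {s.name: s.base_gain * s.weight for s in sources}
    total = sum(raw.values())
    scale = min(1.0, max_total / total) if total > 0 else 0.0
    return {name: g * scale for name, g in raw.items()}

# Two equally loud microphones; an external application triples the weight
# of the one where wildlife of interest was detected.
gains = mix_gains([
    Source("mic_marsh", base_gain=0.5),
    Source("mic_nest", base_gain=0.5, weight=3.0),
])
```

In this example the weighted source ends up three times as loud as the other while the overall level is unchanged, so the mix tilts towards it without simply getting louder.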

HearThere's R&D was supported by Living Observatory, Inc. and the members of the MIT Media Lab, with user testing facilitated by the Massachusetts Audubon Society and its Tidmarsh Wildlife Sanctuary.